Over the last week or so I have been reading about and investigating the subject of this post's title. Some quotes from various papers' abstracts on the matter are:

In addition, numerous blogs and online articles also suggest that forex seasonality is a real phenomenon, so I thought I'd have a quick look into it myself.

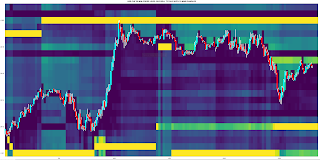

Rather than do a full statistical analysis, I have used the following Octave script:

clear all ;

data = dlmread( '/home/path/to/hourly_currency_index_g_mults' ) ;

## aud_x = x( 1) ; cad_x = x( 2 ) ; chf_x = x( 3 ) ; eur_x = x( 4 ) ; gbp_x = x( 5 ) ; hkd_x = x( 6 ) ;

## jpy_x = x( 7 ) ; nzd_x = x( 8 ) ; sgd_x = x( 9 ) ; usd_x = x( 10 ) ; ## plus 6 for ix to account for date cols

## first 6 cols are YYYY MM DD HH-GMT HH-BST HH-EST

logged_data = data ; logged_data( : , 7 : end ) = log( logged_data( : , 7 : end ) ) ;

## get the days. The days of the week are numbered 1–7 with the first day being Sunday.

days_num = weekday( datenum( [ data(:,1) , data(:,2) , data(:,3) , data(:,5) ] ) ) ; ## BST time

start = input( 'Do you want to enter start date? Y or N ' , 's' ) ;

if ( strcmp( tolower( start ) , 'y' ) )

year_start = input( 'Enter year YYYY: ' ) ;

month_start = input( 'Enter month MM: ' ) ;

day_start = input( 'Enter day date: ' ) ;

delete_ix = find( (logged_data(:,1)==year_start) .* (logged_data(:,2)==month_start) .* (logged_data(:,3)==day_start) ) ;

if ( !isempty( delete_ix ) )

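## delete_ix holds every hourly row of the start date, so drop only the rows before the first of them

logged_data( 1 : delete_ix( 1 ) - 1 , : ) = [] ; days_num( 1 : delete_ix( 1 ) - 1 , : ) = [] ;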

else

disp( 'Invalid start date, so charts will show all data.' ) ;

endif

endif

## create individual day indices

monday_indices = [ ( 0 : 1 : 23 )' , zeros( 24 , 10 ) ] ;

tuesday_indices = monday_indices ;

wednesday_indices = monday_indices ;

thursday_indices = monday_indices ;

friday_indices = monday_indices ;

alldays_indices = monday_indices ;

running_denom = zeros( 24 , 10 ) ;

for jj = 0 : 23

ix = find( ( days_num == 2 ) .* ( logged_data( : , 5 ) == jj ) ) ;

running_denom( jj + 1 , : ) = running_denom( jj + 1 , : ) + numel( ix ) ;

monday_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ./ numel( ix ) ;

alldays_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ;

endfor

for jj = 0 : 23

ix = find( ( days_num == 3 ) .* ( logged_data( : , 5 ) == jj ) ) ;

running_denom( jj + 1 , : ) = running_denom( jj + 1 , : ) + numel( ix ) ;

tuesday_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ./ numel( ix ) ;

alldays_indices( jj + 1 , 2 : end ) = alldays_indices( jj + 1 , 2 : end ) + sum( logged_data( ix , 7 : end ) , 1 ) ;

endfor

for jj = 0 : 23

ix = find( ( days_num == 4 ) .* ( logged_data( : , 5 ) == jj ) ) ;

running_denom( jj + 1 , : ) = running_denom( jj + 1 , : ) + numel( ix ) ;

wednesday_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ./ numel( ix ) ;

alldays_indices( jj + 1 , 2 : end ) = alldays_indices( jj + 1 , 2 : end ) + sum( logged_data( ix , 7 : end ) , 1 ) ;

endfor

for jj = 0 : 23

ix = find( ( days_num == 5 ) .* ( logged_data( : , 5 ) == jj ) ) ;

running_denom( jj + 1 , : ) = running_denom( jj + 1 , : ) + numel( ix ) ;

thursday_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ./ numel( ix ) ;

alldays_indices( jj + 1 , 2 : end ) = alldays_indices( jj + 1 , 2 : end ) + sum( logged_data( ix , 7 : end ) , 1 ) ;

endfor

for jj = 0 : 20 ## market closes at 17:00 EST

ix = find( ( days_num == 6 ) .* ( logged_data( : , 5 ) == jj ) ) ;

running_denom( jj + 1 , : ) = running_denom( jj + 1 , : ) + numel( ix ) ;

friday_indices( jj + 1 , 2 : end ) = sum( logged_data( ix , 7 : end ) , 1 ) ./ numel( ix ) ;

alldays_indices( jj + 1 , 2 : end ) = alldays_indices( jj + 1 , 2 : end ) + sum( logged_data( ix , 7 : end ) , 1 ) ;

endfor

alldays_indices( : , 2 : end ) = alldays_indices( : , 2 : end ) ./ running_denom ;

monday_indices( : , 2 : end ) = cumsum( monday_indices( : , 2 : end ) ) ;

tuesday_indices( : , 2 : end ) = cumsum( tuesday_indices( : , 2 : end ) ) ;

wednesday_indices( : , 2 : end ) = cumsum( wednesday_indices( : , 2 : end ) ) ;

thursday_indices( : , 2 : end ) = cumsum( thursday_indices( : , 2 : end ) ) ;

friday_indices( : , 2 : end ) = cumsum( friday_indices( : , 2 : end ) ) ;

alldays_indices( : , 2 : end ) = cumsum( alldays_indices( : , 2 : end ) ) ;

if ( ishandle(1) )

clf(1) ;

endif

figure( 1 ) ;

h1 = axes( 'position' , [ 0.03 , 0.54 , 0.30 , 0.43 ] ) ; plot( monday_indices(:,3) , 'k' , 'linewidth' , 2 , ...

monday_indices(:,4) , 'c' , 'linewidth' , 2 , ...

monday_indices(:,5) , 'b' , 'linewidth' , 2 , ...

monday_indices(:,6) , 'r' , 'linewidth' , 2 , ...

monday_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'MONDAY' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

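## note: vline is not a core Octave function; all the calls below assume a user-supplied vline helper is on the load path

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;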

h2 = axes( 'position' , [ 0.36 , 0.54 , 0.30 , 0.43 ] ) ; plot( tuesday_indices(:,3) , 'k' , 'linewidth' , 2 , ...

tuesday_indices(:,4) , 'c' , 'linewidth' , 2 , ...

tuesday_indices(:,5) , 'b' , 'linewidth' , 2 , ...

tuesday_indices(:,6) , 'r' , 'linewidth' , 2 , ...

tuesday_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'TUESDAY' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h3 = axes( 'position' , [ 0.69 , 0.54 , 0.30 , 0.43 ] ) ; plot( wednesday_indices(:,3) , 'k' , 'linewidth' , 2 , ...

wednesday_indices(:,4) , 'c' , 'linewidth' , 2 , ...

wednesday_indices(:,5) , 'b' , 'linewidth' , 2 , ...

wednesday_indices(:,6) , 'r' , 'linewidth' , 2 , ...

wednesday_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'WEDNESDAY' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h4 = axes( 'position' , [ 0.03 , 0.04 , 0.30 , 0.43 ] ) ; plot( thursday_indices(:,3) , 'k' , 'linewidth' , 2 , ...

thursday_indices(:,4) , 'c' , 'linewidth' , 2 , ...

thursday_indices(:,5) , 'b' , 'linewidth' , 2 , ...

thursday_indices(:,6) , 'r' , 'linewidth' , 2 , ...

thursday_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'THURSDAY' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h5 = axes( 'position' , [ 0.36 , 0.04 , 0.30 , 0.43 ] ) ; plot( friday_indices(:,3) , 'k' , 'linewidth' , 2 , ...

friday_indices(:,4) , 'c' , 'linewidth' , 2 , ...

friday_indices(:,5) , 'b' , 'linewidth' , 2 , ...

friday_indices(:,6) , 'r' , 'linewidth' , 2 , ...

friday_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 21]) ; grid minor on ; title( 'FRIDAY' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h6 = axes( 'position' , [ 0.69 , 0.04 , 0.30 , 0.43 ] ) ; plot( alldays_indices(:,3) , 'k' , 'linewidth' , 2 , ...

alldays_indices(:,4) , 'c' , 'linewidth' , 2 , ...

alldays_indices(:,5) , 'b' , 'linewidth' , 2 , ...

alldays_indices(:,6) , 'r' , 'linewidth' , 2 , ...

alldays_indices(:,11) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'ALL DAYS COMBINED' ) ;

legend( 'CAD' , 'CHF' , 'EUR' , 'GBP' , 'USD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

if ( ishandle(2) )

clf(2) ;

endif

figure( 2 ) ;

h1 = axes( 'position' , [ 0.03 , 0.54 , 0.30 , 0.43 ] ) ; plot( monday_indices(:,2) , 'k' , 'linewidth' , 2 , ...

monday_indices(:,7) , 'c' , 'linewidth' , 2 , ...

monday_indices(:,8) , 'b' , 'linewidth' , 2 , ...

monday_indices(:,9) , 'r' , 'linewidth' , 2 , ...

monday_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'MONDAY' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h2 = axes( 'position' , [ 0.36 , 0.54 , 0.30 , 0.43 ] ) ; plot( tuesday_indices(:,2) , 'k' , 'linewidth' , 2 , ...

tuesday_indices(:,7) , 'c' , 'linewidth' , 2 , ...

tuesday_indices(:,8) , 'b' , 'linewidth' , 2 , ...

tuesday_indices(:,9) , 'r' , 'linewidth' , 2 , ...

tuesday_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'TUESDAY' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h3 = axes( 'position' , [ 0.69 , 0.54 , 0.30 , 0.43 ] ) ; plot( wednesday_indices(:,2) , 'k' , 'linewidth' , 2 , ...

wednesday_indices(:,7) , 'c' , 'linewidth' , 2 , ...

wednesday_indices(:,8) , 'b' , 'linewidth' , 2 , ...

wednesday_indices(:,9) , 'r' , 'linewidth' , 2 , ...

wednesday_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'WEDNESDAY' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h4 = axes( 'position' , [ 0.03 , 0.04 , 0.30 , 0.43 ] ) ; plot( thursday_indices(:,2) , 'k' , 'linewidth' , 2 , ...

thursday_indices(:,7) , 'c' , 'linewidth' , 2 , ...

thursday_indices(:,8) , 'b' , 'linewidth' , 2 , ...

thursday_indices(:,9) , 'r' , 'linewidth' , 2 , ...

thursday_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'THURSDAY' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h5 = axes( 'position' , [ 0.36 , 0.04 , 0.30 , 0.43 ] ) ; plot( friday_indices(:,2) , 'k' , 'linewidth' , 2 , ...

friday_indices(:,7) , 'c' , 'linewidth' , 2 , ...

friday_indices(:,8) , 'b' , 'linewidth' , 2 , ...

friday_indices(:,9) , 'r' , 'linewidth' , 2 , ...

friday_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 21]) ; grid minor on ; title( 'FRIDAY' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;

h6 = axes( 'position' , [ 0.69 , 0.04 , 0.30 , 0.43 ] ) ; plot( alldays_indices(:,2) , 'k' , 'linewidth' , 2 , ...

alldays_indices(:,7) , 'c' , 'linewidth' , 2 , ...

alldays_indices(:,8) , 'b' , 'linewidth' , 2 , ...

alldays_indices(:,9) , 'r' , 'linewidth' , 2 , ...

alldays_indices(:,10) , 'g' , 'linewidth' , 2 ) ; xlim([0 23]) ; grid minor on ; title( 'ALL DAYS COMBINED' ) ;

legend( 'AUD' , 'HKD' , 'JPY' , 'NZD' , 'SGD' , 'location' , 'north' , 'orientation' , 'horizontal' ) ;

vline( 7 , 'r' ) ; vline( 12 , 'g' ) ;
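
As a rough sketch only, the five near-identical per-day loops in the script could be collapsed into a single helper that averages the logged multipliers by hour for one weekday and then cumulates them. The function name `hourly_profile`, its arguments and the toy data below are my own illustration and are not part of the script above. Unlike the script, the sketch leaves an hour at zero when it has no data.

```octave
1 ; ## marks this file as a script so that a function can be defined inline

## Hypothetical helper, not part of the original script.
## hours     : N x 1 vector of hour-of-day values ( 0 to 23 )
## days_num  : N x 1 vector of weekday numbers ( 1 = Sunday , as returned by weekday )
## log_mults : N x K matrix of logged hourly multipliers, one column per currency
## day       : weekday number to select , e.g. 2 for Monday
## last_hour : last hour to include , e.g. 23 , or 20 for Friday
function profile = hourly_profile( hours , days_num , log_mults , day , last_hour )
  profile = zeros( 24 , columns( log_mults ) ) ;
  for jj = 0 : last_hour
    ix = ( days_num == day ) & ( hours == jj ) ;
    if ( any( ix ) ) ## hours with no data are left at zero
      profile( jj + 1 , : ) = mean( log_mults( ix , : ) , 1 ) ;
    endif
  endfor
  profile = cumsum( profile , 1 ) ; ## cumulative mean log change through the day
endfunction

## toy data: two Mondays ( day 2 ) , hours 0 and 1 , a single currency
hours = [ 0 ; 1 ; 0 ; 1 ] ;
days_num = [ 2 ; 2 ; 2 ; 2 ] ;
log_mults = log( [ 1.01 ; 0.99 ; 1.03 ; 1.01 ] ) ;

monday_profile = hourly_profile( hours , days_num , log_mults , 2 , 23 ) ;
```

With the real data, something like `hourly_profile( logged_data( : , 5 ) , days_num , logged_data( : , 7 : end ) , 2 , 23 )` would give the equivalent of columns 2 to 11 of `monday_indices`, assuming every hour has at least one observation.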